Multimodal AI in 2026: Why Text, Image, Voice, and Video Models Are Merging

Author: sana

Released: February 11, 2026

AI no longer lives in chat boxes. In 2026, it sees, hears, and responds across formats in one flow. That shift isn’t cosmetic; it’s structural. Businesses that adopt multimodal AI can pull ahead of those stuck in text-only workflows.

If you’re building, marketing, or investing in AI-driven products, understanding this shift isn’t optional. It directly affects conversion rates, customer experience, and operational efficiency.

What Multimodal AI Means

Multimodal AI now refers to single models that natively process text, images, audio, and video together. Not stitched tools. Not plugins. One system.

Earlier systems bolted vision or speech onto text models. Today’s models are trained with shared embeddings and cross-modal attention, meaning all inputs live in the same “language space.”
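To make the idea of a shared “language space” concrete, here is a minimal sketch in plain Python. It assumes each modality has its own small feature vector and a projection into a common 2-d space. The projection weights and feature values are hand-picked for illustration, not taken from any real model, which would learn them during training.

```python
import math

def project(features, weights):
    """Linear projection: one output value per row of `weights`."""
    return [sum(w * x for w, x in zip(row, features)) for row in weights]

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

# Hypothetical, hand-picked projection weights (a real model learns these).
TEXT_TO_SHARED = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]             # 3-d text -> 2-d shared
IMAGE_TO_SHARED = [[0.5, 0.5, 0.0, 0.0], [0.0, 0.0, 0.5, 0.5]]  # 4-d image -> 2-d shared

# Made-up feature vectors standing in for encoder outputs.
text_cracked = project([0.9, 0.1, 0.3], TEXT_TO_SHARED)         # caption: "cracked screen"
image_cracked = project([1.0, 0.8, 0.1, 0.1], IMAGE_TO_SHARED)  # photo of a cracked screen
image_intact = project([0.1, 0.1, 1.0, 0.8], IMAGE_TO_SHARED)   # photo of an intact screen

# Once both modalities live in the same space, they can be compared directly.
sim_match = cosine(text_cracked, image_cracked)
sim_mismatch = cosine(text_cracked, image_intact)
print(sim_match > sim_mismatch)
```

The point of the sketch is only that, after projection, a caption and a photo become vectors of the same size that can be compared with one similarity function.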

This allows true reasoning across formats. For example, a model can watch a product demo video, read user comments, and generate a marketing script—all in one pass.

That’s the difference: not multimodal features, but multimodal thinking.

Why Everything Is Merging Now

1. The Real World Isn’t Text

Most business data isn’t neatly written. It’s:

- Call recordings

- Product images

- User-generated video

- Scanned documents

Text-only AI misses context. A customer saying “this doesn’t work” means something very different when paired with a frustrated tone or a broken product image.

Multimodal models reduce ambiguity and increase accuracy—especially in customer-facing systems. Teams are seeing fewer escalations simply because the AI understands the full situation earlier.

2. Architecture Finally Caught Up

Transformers evolved beyond text tokens. Now, images, audio, and video are tokenized into the same sequence space.

This enables cross-modal alignment—a model can connect what it hears with what it sees.
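As a rough illustration of “the same sequence space,” the sketch below merges text, image, and audio into one ordered list of tokens. Everything here is a simplifying assumption: the `Token` class, the whitespace tokenizer, the patch size, and the frame length are hypothetical stand-ins, and a real model would also convert each item into an embedding vector.

```python
from dataclasses import dataclass

@dataclass
class Token:
    modality: str    # "text", "image", or "audio"
    position: int    # index within the merged sequence
    payload: object  # word, pixel patch, or audio frame

def text_tokens(text):
    """Whitespace split, a stand-in for a real subword tokenizer."""
    return text.split()

def image_patches(pixels, patch):
    """Cut a 2-D pixel grid into non-overlapping patch x patch tiles, row-major."""
    height, width = len(pixels), len(pixels[0])
    return [
        [row[c:c + patch] for row in pixels[r:r + patch]]
        for r in range(0, height, patch)
        for c in range(0, width, patch)
    ]

def audio_frames(samples, frame_len):
    """Split a list of audio samples into fixed-length frames."""
    return [samples[i:i + frame_len] for i in range(0, len(samples), frame_len)]

def build_sequence(text, pixels, samples, patch=2, frame_len=4):
    """Concatenate all modalities into one sequence with shared positions."""
    items = (
        [("text", t) for t in text_tokens(text)]
        + [("image", p) for p in image_patches(pixels, patch)]
        + [("audio", f) for f in audio_frames(samples, frame_len)]
    )
    return [Token(modality, i, payload) for i, (modality, payload) in enumerate(items)]

sequence = build_sequence(
    "this doesn't work",
    pixels=[[0] * 4 for _ in range(4)],  # blank 4x4 image
    samples=list(range(8)),              # 8 dummy audio samples
)
print([t.modality for t in sequence])
```

With these inputs the merged sequence holds three text tokens, four image patches, and two audio frames, all indexed on one shared position axis, which is what lets attention layers relate any item to any other.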

Leading systems such as Google Gemini and OpenAI’s GPT models are described by their developers as natively multimodal.

The result: fewer pipelines, fewer tools, and more consistent outputs. Instead of chaining five APIs, teams increasingly deploy one model that handles the full workflow.

3. Compute Made It Practical

Processing video and audio in real time used to be prohibitively expensive.

That’s changing fast:

- Larger context windows handle long video streams without truncation

- GPU/TPU optimization reduces latency for live interactions

- Distributed inference lowers cost per request at scale

Hardware vendors such as NVIDIA are optimizing their platforms for multimodal workloads at production scale.

This shift matters because it unlocks continuous input, not just static uploads. Systems can now process ongoing voice conversations or live camera feeds without breaking context.

4. Users Expect More Than Chat

Chat-only interfaces now feel limited.

Users expect:

- Voice input (hands-free interaction while driving, working, or multitasking)

- Image understanding (upload a photo instead of describing it)

- Video generation (ads, tutorials, social content on demand)

Apps that don’t support these feel outdated.

This is why companies integrating multimodal AI see longer session times, higher retention, and better conversion—because friction drops at every step.

The Multimodal AI Leaders Shaping 2026

General-Purpose Leaders

- Google Gemini 3: Deep multimodal reasoning across text, video, and audio.

- OpenAI GPT-5 family: Unified generation and reasoning across formats.

- Anthropic Claude: Strong in multimodal workflows and agent-driven tasks (Anthropic).

These models are moving toward one system replacing multiple tools, especially in enterprise stacks where simplicity reduces both cost and failure points.

Specialized Systems

- Meta SAM 3: Focuses on advanced visual segmentation and understanding (Meta AI).

- ByteDance Seedance 2.0: Targets full-stack media generation, with text, audio, and video in one pipeline (Seedance).

These systems push boundaries in specific domains but still align with the same trend: convergence.

The Bigger Pattern

We’re moving from:

- Tool-based AI → Generalist systems

- Feature add-ons → Core architecture

Multimodal is no longer premium. It’s becoming baseline.

How Multimodal AI Actually Works

At a high level, four ideas do most of the work.

- Input fusion

Text, images, and audio are combined into a shared representation. For example, a product image, a user complaint, and a support call transcript can be encoded together.

- Cross-modal attention

The model connects signals across different formats. It can link a visual defect with a spoken complaint and a written return request.

- Flexible output generation

The model can respond in different formats depending on the use case, including text, speech, images, or video.

- Training with paired data

Training relies on large paired datasets such as image-text and video-audio. This is still a bottleneck because labeling, especially for high-quality video, is expensive.
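The cross-modal attention step described above can be sketched as standard scaled dot-product attention, where a text vector acts as the query and image patches supply the keys and values. This is a toy version in plain Python under stated assumptions: the vectors are hand-picked, there is a single attention head, and there are no learned projection matrices, all of which a real model would have.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Each query (one modality) attends over keys/values (another modality)."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        # Similarity between this query and every key, scaled by sqrt(dim).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Weighted average of the value vectors.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical vectors: one text query (say, the word "crack")
# and three image patches, only the first of which resembles the query.
text_queries = [[2.0, 0.0]]
image_keys = [[2.0, 0.0], [0.0, 2.0], [0.0, 0.0]]
image_values = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]

attended, weights = cross_attention(text_queries, image_keys, image_values)
print(weights[0])
```

The first patch receives the largest attention weight because its key points in the same direction as the text query. That is the mechanism behind linking a spoken or written complaint to the part of an image it refers to.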

Where Multimodal AI Is Driving Results

1. Content Creation

Marketing teams are compressing workflows dramatically.

Instead of separate tools, they now:

- Generate scripts based on product specs

- Produce voiceovers in multiple tones and languages

- Create visuals aligned with brand style

- Assemble full video ads with minimal manual editing

This enables rapid A/B testing. Instead of launching one campaign, teams can test dozens of variations in parallel—headline, visuals, voice, pacing—all generated automatically.

That speed directly translates to better ad performance and lower acquisition cost.
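The “dozens of variations” figure follows from simple combinatorics. As a sketch, the snippet below enumerates every combination of a few hypothetical options per creative dimension; the option names are invented for illustration and would come from your own campaign brief.

```python
from itertools import product

# Hypothetical options for each creative dimension.
options = {
    "headline": ["Save time", "Cut costs", "Ship faster"],
    "visual": ["product close-up", "lifestyle shot"],
    "voice": ["warm", "energetic"],
    "pacing": ["15s", "30s"],
}

# One dict per variant, covering every combination of the options above.
variants = [dict(zip(options, combo)) for combo in product(*options.values())]

print(len(variants))  # 3 * 2 * 2 * 2 = 24
print(variants[0])
```

Even this small grid yields 24 distinct ads, which is why generation speed matters: each added dimension multiplies the number of assets to produce.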

2. Enterprise Automation

Customer support becomes far more effective.

AI can:

- Analyze tone in voice calls to detect frustration early

- Read screenshots or photos submitted by users

- Combine both to produce more accurate responses

For example, instead of asking customers to describe a broken product, the system can analyze an uploaded image and instantly identify the issue.

Document processing also improves. Contracts, invoices, and scanned forms are interpreted alongside structured data, reducing manual review.

This shortens processing time and reduces human error in high-volume operations.

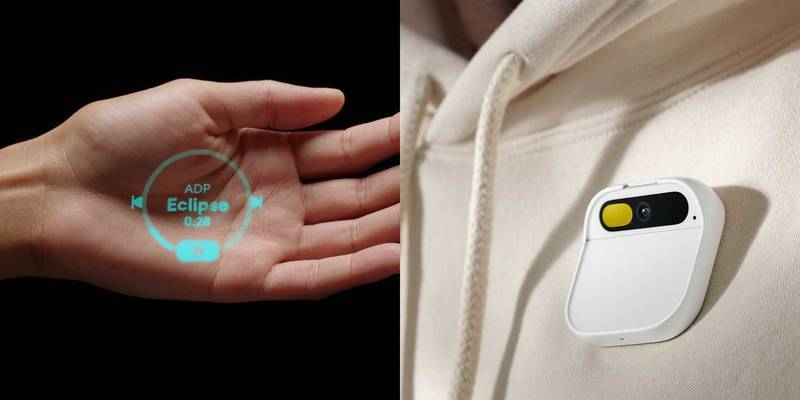

3. Human-Computer Interaction

Voice assistants are evolving into perceptual systems.

They don’t just respond—they observe and adapt.

For example, a field technician can show a machine via camera, describe the issue verbally, and get real-time guidance. The system can highlight components visually while explaining steps.

This reduces training time and improves accuracy in complex environments.

Compared to traditional interfaces, this is faster, more intuitive, and far closer to how humans communicate.

4. Emerging Use Cases

- Robotics: Combining vision and instructions to perform physical tasks with higher precision

- Healthcare: Integrating imaging, reports, and sensor data for more accurate diagnostics

- Education: Interactive video tutors that adjust explanations based on student responses

Organizations like Mayo Clinic are already exploring multimodal diagnostics, combining medical imaging with clinical notes and patient history.

These use cases are still developing, but they show where multimodal systems have the strongest long-term impact.

Breakthrough Trends in 2026

Native multimodality is the default. New models are built this way from day one, not retrofitted later.

Real-time video generation is improving fast. Faster diffusion models and optimized inference pipelines enable interactive media creation, not just batch rendering.

Agents and multimodal models are converging. AI systems can now:

- Read screens and UI elements

- Interpret visuals and user behavior

- Take actions across software tools

This turns AI from a responder into an operator.

Some industry estimates put the share of deployed AI systems that use multimodal inputs at around 30%, concentrated in customer support, marketing, and analytics workflows.

What’s Still Slowing Adoption

Despite progress, a few constraints remain.

- Data labeling across modalities is expensive and time-consuming, especially for video

- Video processing still carries high compute costs compared to text

- Real-time use is constrained by latency, especially on edge devices

- Evaluation is harder—accuracy must account for multiple input types simultaneously

These challenges don’t stop adoption, but they do influence where and how quickly companies deploy multimodal systems.
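One way to approach the evaluation problem is to report accuracy per combination of input types rather than as a single number. The sketch below assumes each test case is logged with the set of modalities it used and whether the answer was correct. The function name, record format, and sample results are hypothetical.

```python
from collections import defaultdict

def accuracy_by_modalities(results):
    """Group (modalities, is_correct) records by modality mix and average each group."""
    totals = defaultdict(lambda: [0, 0])  # key -> [correct count, total count]
    for modalities, is_correct in results:
        key = "+".join(sorted(modalities))
        totals[key][0] += int(is_correct)
        totals[key][1] += 1
    return {key: correct / count for key, (correct, count) in totals.items()}

# Made-up evaluation log for illustration only.
results = [
    ({"text"}, True),
    ({"text"}, True),
    ({"text", "image"}, True),
    ({"text", "image"}, False),
    ({"text", "image", "audio"}, False),
]

scores = accuracy_by_modalities(results)
overall = sum(ok for _, ok in results) / len(results)
print(scores)
print(overall)
```

In this made-up log the overall accuracy of 0.6 hides the fact that text-only cases are all correct while the three-modality case fails, which is the kind of gap a single aggregate metric would miss.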

What This Means for Your Strategy

The shift is clear: text-first AI is fading.

Winning systems are:

- Perceptual (they see and hear)

- Contextual (they combine signals across formats)

- Action-oriented (they execute, not just suggest)

If you’re building products, prioritize multimodal input early, even if your first use case looks mostly text-based.

If you’re in marketing, multimodal generation can help you produce and test content at a much larger scale.

If you’re working on operations, look for workflows where combining voice, image, and text can reduce friction or improve accuracy.

Most of the real gains come from integration, not small experiments.

AI is starting to feel less like a tool and more like a collaborator that can understand context across formats. The gap between companies that use multimodal systems and those that don’t is likely to grow quickly.

At this point, the question is not whether to adopt it. It is how quickly you can fit it into what you already do.